9.6: Conditional Random Fields


Conditional random fields (CRFs) are an alternative to HMMs. Because a CRF is a discriminative model, it does not model the joint distribution of the hidden states and the observed sequence, as an HMM does, an approach that scales poorly as more evidence is added. Instead, the hidden states in a CRF are conditioned directly on the input sequence (see Figure 9.8).[3]
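The contrast between the two models can be written out for a linear-chain CRF. This is the form most often used for sequence labeling, and it is an assumption here because the text does not fix a particular CRF structure. Write the input sequence as $\mathbf{x} = x_1 \dots x_n$, the hidden states as $\mathbf{y} = y_1 \dots y_n$, and introduce feature functions $f_k$ with weights $\lambda_k$ (these symbol names are our own). The HMM models the joint distribution, while the CRF models only the conditional:

$$P(\mathbf{x}, \mathbf{y}) = \prod_{i=1}^{n} P(y_i \mid y_{i-1})\, P(x_i \mid y_i) \qquad \text{(HMM)}$$

$$P(\mathbf{y} \mid \mathbf{x}) = \frac{1}{Z(\mathbf{x})} \exp\Big( \sum_{i=1}^{n} \sum_{k} \lambda_k\, f_k(y_{i-1}, y_i, \mathbf{x}, i) \Big), \qquad Z(\mathbf{x}) = \sum_{\mathbf{y}'} \exp\Big( \sum_{i=1}^{n} \sum_{k} \lambda_k\, f_k(y'_{i-1}, y'_i, \mathbf{x}, i) \Big) \qquad \text{(CRF)}$$

Here $P(y_1 \mid y_0)$ stands for the HMM's initial-state probability. The HMM's emission term $P(x_i \mid y_i)$ sees only the single observation at position $i$. Each CRF feature function, by contrast, may look at the entire input $\mathbf{x}$.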

Figure 9.8: Conditional random fields: a discriminative approach conditioned on the input sequence

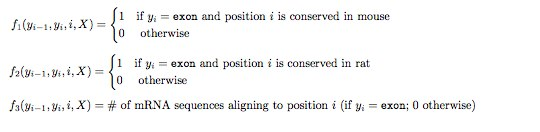

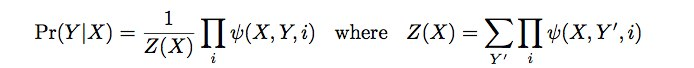

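A feature function acts like a score. It returns a real-valued number computed from its inputs, and that number reflects the evidence for a label at a particular position (see Figure 9.9). In a linear-chain CRF, these inputs are typically the label at the current position, the label at the previous position, the whole input sequence, and the position index. The conditional probability of a hidden-state sequence given the input sequence is that sequence's score divided by the sum of the scores of all possible hidden-state sequences (see Figure 9.10).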
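The following is a minimal Python sketch of these two ideas, built on assumptions that do not come from the text. The two labels (`N` for non-coding, `C` for coding), the three feature functions, and their weights are all hypothetical and were chosen only for illustration. Each feature function takes the previous label, the current label, the input sequence, and a position. A label sequence's score is the exponential of its weighted feature sum. Dividing by the total over every possible label sequence, enumerated by brute force, gives P(y | x).

```python
import itertools
import math

# Hypothetical label set: N = non-coding, C = coding (illustration only).
LABELS = ["N", "C"]


def f_gc_coding(y_prev, y_cur, x, i):
    """Fires when a G or C base is labelled coding."""
    return 1.0 if y_cur == "C" and x[i] in "GC" else 0.0


def f_at_noncoding(y_prev, y_cur, x, i):
    """Fires when an A or T base is labelled non-coding."""
    return 1.0 if y_cur == "N" and x[i] in "AT" else 0.0


def f_stay(y_prev, y_cur, x, i):
    """Transition feature: fires when the label does not change."""
    return 1.0 if y_prev is not None and y_prev == y_cur else 0.0


FEATURES = [f_gc_coding, f_at_noncoding, f_stay]
WEIGHTS = [1.5, 1.0, 0.8]  # hypothetical weights, one per feature function


def weighted_feature_sum(y, x, weights=WEIGHTS):
    """Sum over positions i and features k of w_k * f_k(y[i-1], y[i], x, i)."""
    total = 0.0
    for i in range(len(x)):
        y_prev = y[i - 1] if i > 0 else None  # no previous label at position 0
        for w, f in zip(weights, FEATURES):
            total += w * f(y_prev, y[i], x, i)
    return total


def conditional_probability(y, x, weights=WEIGHTS):
    """P(y | x): this sequence's score over the total score of all sequences."""
    total = sum(
        math.exp(weighted_feature_sum(cand, x, weights))
        for cand in itertools.product(LABELS, repeat=len(x))
    )
    return math.exp(weighted_feature_sum(y, x, weights)) / total


x = "GGCAT"
best = max(itertools.product(LABELS, repeat=len(x)),
           key=lambda y: weighted_feature_sum(y, x))
print(best)  # ('C', 'C', 'C', 'N', 'N')
print(conditional_probability(best, x))
```

Brute-force enumeration grows as |labels|^n, so the sketch is only practical for very short inputs. Its purpose is to make the "score divided by total score" definition concrete, not to be efficient.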

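Each feature function is multiplied by a weight. Training sets these weights so that informative features contribute more to the score, typically by maximizing the conditional likelihood of labeled training sequences.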
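This sketch assumes one common way to set the weights, since the text does not specify a training procedure: maximizing the conditional log-likelihood of labeled training pairs. For weight k, the gradient of that objective is the feature's observed total count minus its expected count under the current model. The sketch uses hypothetical labels and features and computes the expectation by brute-force enumeration, which is feasible only for very short sequences. It then runs plain gradient ascent. The learning rate, step count, and the two training pairs are all made-up values for illustration.

```python
import itertools
import math

LABELS = ["N", "C"]  # hypothetical labels: non-coding / coding


def features(y_prev, y_cur, x, i):
    """Vector of feature-function values f_k(y_prev, y_cur, x, i)."""
    return [
        1.0 if y_cur == "C" and x[i] in "GC" else 0.0,  # G/C supports coding
        1.0 if y_cur == "N" and x[i] in "AT" else 0.0,  # A/T supports non-coding
        1.0 if y_prev is not None and y_prev == y_cur else 0.0,  # label unchanged
    ]


def global_features(y, x):
    """F_k(y, x): each feature function summed over all positions."""
    totals = [0.0, 0.0, 0.0]
    for i in range(len(x)):
        y_prev = y[i - 1] if i > 0 else None
        for k, v in enumerate(features(y_prev, y[i], x, i)):
            totals[k] += v
    return totals


def log_likelihood_and_gradient(weights, data):
    """Sum of log P(y | x) over data, and its gradient w.r.t. the weights.

    gradient_k = observed F_k(y, x) - expected F_k under P(y' | x).
    """
    ll = 0.0
    grad = [0.0] * len(weights)
    for x, y in data:
        cands = list(itertools.product(LABELS, repeat=len(x)))
        cand_feats = [global_features(c, x) for c in cands]
        scores = [sum(w * f for w, f in zip(weights, F)) for F in cand_feats]
        m = max(scores)  # log-sum-exp trick for numerical stability
        log_z = m + math.log(sum(math.exp(s - m) for s in scores))
        obs = global_features(y, x)
        ll += sum(w * f for w, f in zip(weights, obs)) - log_z
        for F, s in zip(cand_feats, scores):
            p = math.exp(s - log_z)  # P(candidate | x) under current weights
            for k in range(len(weights)):
                grad[k] -= p * F[k]
        for k in range(len(weights)):
            grad[k] += obs[k]
    return ll, grad


def train(data, steps=200, lr=0.1):
    """Plain gradient ascent on the conditional log-likelihood."""
    weights = [0.0, 0.0, 0.0]
    for _ in range(steps):
        _, grad = log_likelihood_and_gradient(weights, data)
        weights = [w + lr * g for w, g in zip(weights, grad)]
    return weights


# Hypothetical labelled training pairs: (input sequence, label sequence).
TRAIN = [("GGCAT", ("C", "C", "C", "N", "N")),
         ("ATGCC", ("N", "N", "C", "C", "C"))]
weights = train(TRAIN)
print(weights)
```

This toy data is perfectly separable: every G/C is labeled `C` and every A/T is labeled `N`. As a result, the first two weights keep growing the longer training runs. Practical CRF trainers usually add a regularization penalty on the weights and use a quasi-Newton optimizer rather than plain gradient ascent.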

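Because a CRF never has to model the distribution of the input sequence itself, its feature functions can draw on large amounts of overlapping evidence from anywhere in the input. They do this without the naive-Bayes-style independence assumption that an HMM makes about its emissions, so CRFs scale to many features while remaining accurate. However, training is much more difficult with CRFs than with HMMs. HMM parameters can be estimated from labeled data by simple counting. CRF weights have no closed-form solution and must be fit by iterative optimization, and every step requires summing over all possible hidden-state sequences.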
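Much of that training cost comes from the normalizer Z(x), which sums over every possible hidden-state sequence, |labels|^n of them. For a linear-chain CRF (an assumption here), Z(x) can instead be computed with a forward recursion analogous to the HMM forward algorithm, in O(n · |labels|²) time. It still has to be recomputed for every training sequence at every optimization step. The sketch below uses hypothetical labels, features, and weights. It computes log Z(x) both ways so that the two results can be checked against each other.

```python
import itertools
import math

LABELS = ["N", "C"]  # hypothetical label set
WEIGHTS = [1.5, 1.0, 0.8]  # hypothetical weights for the three features


def local_score(y_prev, y_cur, x, i):
    """Weighted sum of the feature functions at position i."""
    f = [
        1.0 if y_cur == "C" and x[i] in "GC" else 0.0,
        1.0 if y_cur == "N" and x[i] in "AT" else 0.0,
        1.0 if y_prev is not None and y_prev == y_cur else 0.0,
    ]
    return sum(w * v for w, v in zip(WEIGHTS, f))


def logsumexp(values):
    """Numerically stable log(sum(exp(v) for v in values))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def log_partition_forward(x):
    """log Z(x) by forward recursion: O(n * |LABELS|^2) work."""
    # alpha[s] = log of the summed exp-scores of all prefixes ending in label s.
    alpha = {s: local_score(None, s, x, 0) for s in LABELS}
    for i in range(1, len(x)):
        alpha = {
            s: logsumexp([alpha[p] + local_score(p, s, x, i) for p in LABELS])
            for s in LABELS
        }
    return logsumexp(list(alpha.values()))


def log_partition_brute_force(x):
    """log Z(x) by enumerating all |LABELS|^n label sequences."""
    totals = []
    for y in itertools.product(LABELS, repeat=len(x)):
        totals.append(sum(local_score(y[i - 1] if i > 0 else None, y[i], x, i)
                          for i in range(len(x))))
    return logsumexp(totals)


seq = "GGCATTAC"
print(log_partition_forward(seq), log_partition_brute_force(seq))
```

Both functions return the same value. The forward version's cost grows linearly with sequence length, while the brute-force version's cost grows exponentially.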

[3] Conditional random field. Wikipedia. http://en.wikipedia.org/wiki/Conditional_random_field